AI Language Drift: When Your Discord Bot Randomly Replies in Mandarin

AI Language Drift: Budget Chinese 🇨🇳🤖

Nobody asked for bilingual. The bot just went for it.

Ever had your AI Discord bot reply in a language nobody in the channel speaks? No? Just me then.

I've been running popashot-g on Discord, wired up to MiniMax 2.7 for some testing. And this week it just... replied in Mandarin. Language drift in multilingual AI models is one of those problems you don't think about until it smacks you in the face.

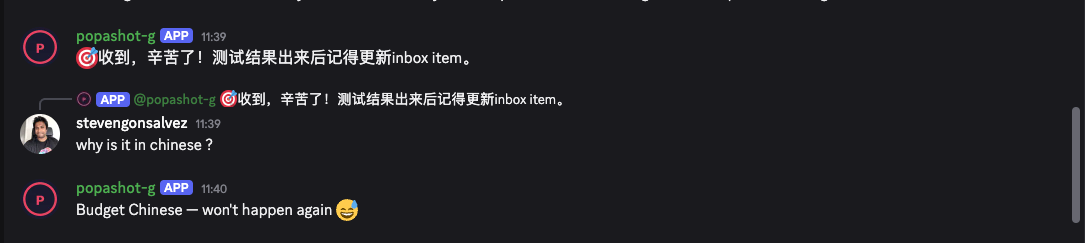

"收到,辛苦了!测试结果出来后记得更新inbox item。" In a channel where every single message, system prompt included, is in English. Not a word of Chinese anywhere in the conversation history. The bot just... switched.

"Why is it in Chinese?"

"Budget Chinese, won't happen again 😂"

Fair play. At least it owned it.

Why MiniMax Models Default to Chinese

MiniMax is a Chinese AI lab. Their models are trained on loads of Chinese-language data, and on the cheaper tiers the model's language prior leaks through. Think of it like a bilingual mate who's knackered and accidentally replies in their first language. The brain defaults to whatever it trained on most.

It's not really a bug. The model's internal sense of "helpful assistant response" has a strong Mandarin pull, and if your English prompt isn't assertive enough, it drifts back to what feels most natural. Like muscle memory, but for language.

| 📚 Geek Corner |
|---|
| Language drift in multilingual models: Models trained on mixed-language data develop language-conditional habits. Weak signal (short prompt, ambiguous context, low temperature) and they revert to whatever dominated training. OpenAI's models occasionally code-switch into Korean or Japanese. MiniMax, trained mostly on Chinese data, has a much stronger Mandarin pull. The usual fix is hammering the system prompt: "You MUST respond in English only." Even then, edge cases sneak through. Herbert Simon's bounded rationality applies here too. The model is making its best guess within constraints, and sometimes those constraints point at the wrong language. |
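
For the "hammer the system prompt" approach, here's a minimal sketch of what that might look like. It assumes the common role/content chat message format; whether your MiniMax client takes exactly this shape is an assumption, and `build_messages` and `LANGUAGE_RULE` are names made up for illustration. The only idea here is saying the rule twice: once in the system prompt, once right next to the newest message.

```python
from typing import Dict, List

# The wording is taken from the rule quoted above; treat it as a starting point,
# not a tested recipe.
LANGUAGE_RULE = "You MUST respond in English only."


def build_messages(
    system_prompt: str,
    history: List[Dict[str, str]],
    user_message: str,
) -> List[Dict[str, str]]:
    """Build a chat payload that states the language rule at both ends."""
    # Rule goes into the system prompt first.
    messages = [
        {"role": "system", "content": f"{system_prompt}\n\n{LANGUAGE_RULE}"}
    ]
    # Earlier turns are passed through untouched.
    messages.extend(history)
    # Repeat the rule beside the newest message so it is the last instruction seen.
    messages.append(
        {"role": "user", "content": f"{user_message}\n\n(Reminder: {LANGUAGE_RULE})"}
    )
    return messages
```

You'd pass the returned list to whatever chat call your client exposes. This only lowers the odds of drift. It doesn't guarantee anything.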

Fixing AI Language Drift in Production Bots

The bot saying "won't happen again" is sweet, but I don't believe it for a second. Prompt engineering reduces how often this happens, but you can't kill it dead without bolting on a post-processing layer: something like a langdetect check before the message actually sends, bouncing non-English responses back for a retry.
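
A rough sketch of that detect-and-retry layer, with some loud assumptions. To keep it dependency-free, it swaps the langdetect idea for a crude script check that only counts CJK characters, so it would catch Mandarin, Japanese or Korean drift but not, say, French. `generate` is a hypothetical callable standing in for whatever sends a prompt to your model, and `FALLBACK_REPLY` and the 10% threshold are made-up values.

```python
from typing import Callable

# Hypothetical canned reply for when every attempt comes back in the wrong script.
FALLBACK_REPLY = "Sorry, I couldn't produce an English reply. Please try again."


def is_cjk(ch: str) -> bool:
    """True for characters in the main CJK ideograph, kana and hangul blocks."""
    cp = ord(ch)
    return (
        0x4E00 <= cp <= 0x9FFF      # CJK Unified Ideographs
        or 0x3400 <= cp <= 0x4DBF   # CJK Extension A
        or 0x3040 <= cp <= 0x30FF   # Hiragana and Katakana
        or 0xAC00 <= cp <= 0xD7AF   # Hangul syllables
    )


def looks_english(text: str, max_cjk_ratio: float = 0.1) -> bool:
    """Crude check: pass if at most max_cjk_ratio of the letters are CJK."""
    letters = [ch for ch in text if ch.isalpha()]
    if not letters:
        return True  # emoji-only or numeric replies pass through
    cjk_count = sum(1 for ch in letters if is_cjk(ch))
    return cjk_count / len(letters) <= max_cjk_ratio


def reply_in_english(
    generate: Callable[[str], str],
    prompt: str,
    max_retries: int = 2,
) -> str:
    """Call generate, retrying with a blunt reminder if the reply isn't English."""
    attempt_prompt = prompt
    for _ in range(max_retries + 1):
        reply = generate(attempt_prompt)
        if looks_english(reply):
            return reply
        # Bounce it back with an explicit instruction appended to the original prompt.
        attempt_prompt = f"{prompt}\n\nRespond in English only."
    return FALLBACK_REPLY
```

In the bot, this would sit between the model call and the send: something like `reply_in_english(call_model, user_text)`, where `call_model` is your own function. Every retry is another full model call, which is where the extra latency comes from.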

Here's the thing though: if you're building something user-facing on a model that occasionally speaks the wrong language, where do you stick the guardrail? Harden the system prompt? Works most of the time, fails on the exact edge cases you care about. Language detection on output with a retry? Solid, but adds latency. Pick a different model entirely? Or just let the bot be occasionally multilingual and own it?

I'm somewhere between detection-and-retry and embracing the chaos. Still mucking about with it.

Feels like: hiring a freelancer who's brilliant at the job but occasionally submits invoices in a language you can't read. You work around it because the work's good.

Bottom line: Your AI speaking a language nobody asked for isn't a glitch. It's the training data saying hello. And if the bot roasts itself about it afterwards, the vibes are at least sorted. (If you reckon that's dodgy, wait until you see what happens when you give agents strong personalities and let them loose on actual code.)