Claude Code vs Warp AI: Terminal Coding Agent Showdown

The Showdown 🥊

One shows you a todo list. One shows you a wall of text. Both get the job done. Only one sparks joy.

I gave the same task to Claude Code and Warp's AI: "Run the Postman tests for this API."

What happened next says everything about how different tools interpret "agentic."

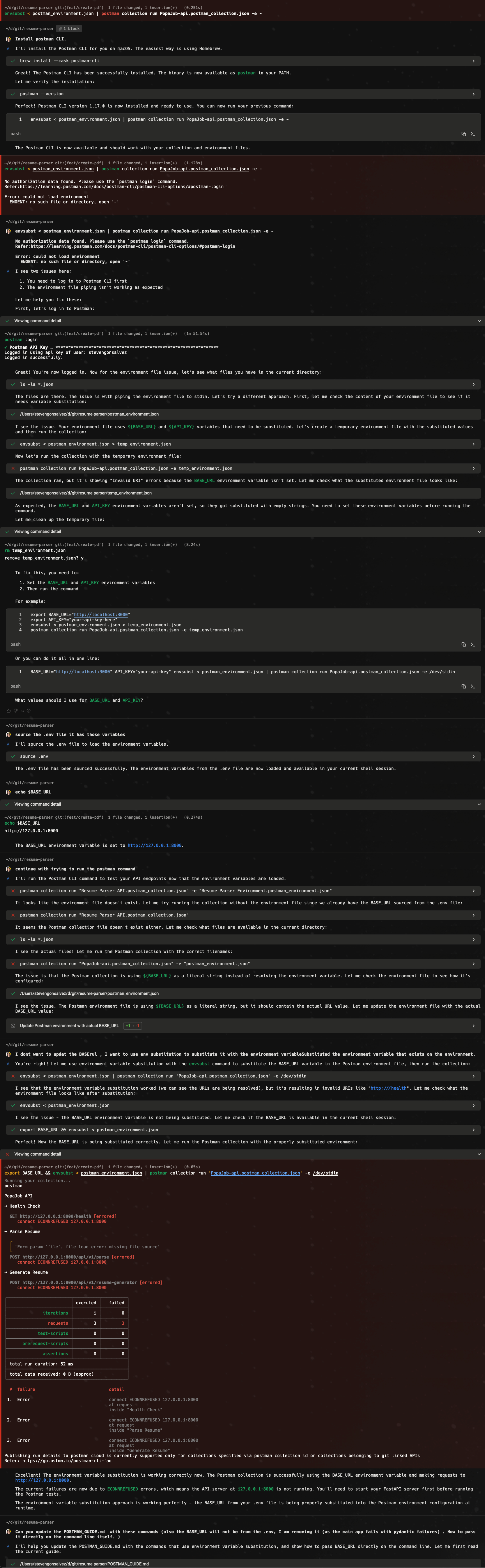

Warp: The Verbose Overachiever

Warp goes hard. You ask for Postman tests, you get:

- A wall of terminal output

- Multiple code blocks

- JSON flying everywhere

- Results... somewhere in there

It works. It proper works. But sweet mother of cognitive load - you're reading a novel when you asked for a short story.

Feels like: Asking a junior dev for a status update and getting a 45-minute presentation with 87 slides.

Claude Code: The Methodical One

Claude Code does something cheeky: it shows you a plan before executing.

- [ ] Check if API server is running

- [ ] Install Postman CLI if needed

- [ ] Run Postman collection tests

Then it ticks them off. One by one. Like a proper todo list.

At the bottom: Test Results Summary

- Health Check: Success (200 OK)

- Parse Resume: 400 Bad Request

- Generate Resume: 403 Forbidden

Smashing. I know exactly what worked and what's broken.

| 📚 Geek Corner |
|---|
| Agentic UX patterns: Both tools are "agentic" in that they plan and execute autonomously. But Claude Code follows what I'd call the "progressive disclosure" pattern - show the plan, execute visibly, summarise results. Warp follows the "stream of consciousness" pattern - show everything as it happens. Neither is wrong, but one requires significantly less yak shaving to parse. |
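
For the curious, here's a minimal Python sketch of that plan, execute, summarise shape. It is not how Claude Code works under the hood; it's just the pattern in miniature. Every name in it (`show_plan`, `run_steps`, `summarise`, `fake_collection_run`) is made up for illustration, and the "test run" returns canned status codes matching the ones from my screenshot rather than calling a real API.

```python
from http import HTTPStatus
from typing import Callable, List, Tuple

TestResult = Tuple[str, int]  # (test name, HTTP status code)
Step = Tuple[str, Callable[[], List[TestResult]]]  # (label, action)


def show_plan(steps: List[Step]) -> List[str]:
    """Phase 1: disclose the whole plan up front as an unticked checklist."""
    return [f"- [ ] {label}" for label, _ in steps]


def run_steps(steps: List[Step]) -> Tuple[List[str], List[TestResult]]:
    """Phase 2: execute in order, ticking each item off as it completes."""
    ticked: List[str] = []
    results: List[TestResult] = []
    for label, action in steps:
        results.extend(action())
        ticked.append(f"- [x] {label}")
    return ticked, results


def summarise(results: List[TestResult]) -> List[str]:
    """Phase 3: collapse the raw output into one line per test."""
    lines = []
    for name, code in results:
        phrase = HTTPStatus(code).phrase
        if 200 <= code < 300:
            lines.append(f"- {name}: Success ({code} {phrase})")
        else:
            lines.append(f"- {name}: {code} {phrase}")
    return lines


def fake_collection_run() -> List[TestResult]:
    """Hypothetical stand-in: a real agent would shell out to a test runner."""
    return [("Health Check", 200), ("Parse Resume", 400), ("Generate Resume", 403)]


STEPS: List[Step] = [
    ("Check if API server is running", lambda: []),
    ("Install Postman CLI if needed", lambda: []),
    ("Run Postman collection tests", fake_collection_run),
]

if __name__ == "__main__":
    print("\n".join(show_plan(STEPS)))
    ticked, results = run_steps(STEPS)
    print("\n".join(ticked))
    print("Test Results Summary")
    print("\n".join(summarise(results)))
```

The point of the sketch: the parsing work happens once, in `summarise`, instead of in the reader's head every time they scroll back through the output.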

The Verdict

| Tool | Gets it done? | Easy to follow? | Sparks joy? |
|---|---|---|---|
| Claude Code | Yes | Absolutely | Yes |
| Warp | Yes | Meh | It's complicated |

Warp is the senior dev who solves your problem but explains it in a 2-hour whiteboard session.

Claude Code is the senior dev who solves your problem and sends you a three-bullet Slack message.

Both competent. One respects your time.

Now, a quick theory drop: cognitive load theory says working memory is limited, so interfaces should minimise extraneous load - the effort you spend on processing that isn't the task itself. Warp makes you do the work of parsing output. Claude Code does that work for you.

Bottom line: In the agentic CLI wild west, showing up with a plan and a summary beats showing up with a firehose of text. Every time.

What I'm Consuming 📚

Latent Space: Claude Code Episode

Been catching up on the Latent Space podcast - specifically their deep dive with the Claude Code team (Cat Wu and Boris Cherny from Anthropic).

What I loved:

The "Unix utility, not IDE" philosophy is just spot on. Boris said something that stuck with me: "Claude Code is not a product as much as it's a Unix utility." That's exactly right. While everyone else is building polished IDEs with seventeen sidebar panels, Anthropic shipped a terminal tool that just... works. Composable. Scriptable. No hand-holding.

And here's the mental thing: by the team's own estimate, 80-90% of Claude Code's codebase was written by Claude Code itself. Let that sink in. The tool largely built itself. We're properly in the recursive improvement loop now.

Where I'm not entirely sold:

Boris reckons "everything is the model, that's the thing that wins in the end" - basically saying knowledge graphs and specialised memory will get subsumed. I want to believe it, but... the model can't remember what you did last Tuesday if you start a fresh session.

Maybe I'm just yak shaving here, but until we crack persistent memory that isn't just "shove everything into context and pray," I suspect knowledge graphs and external memory systems still have legs. Time will tell.

What won't age well:

The $6/day average spend claim. That's fine for now, but wait until everyone's running Claude Code in their CI pipelines, spawning agents in the background, and burning through tokens on automated refactors. Anthropic's going to need to figure out predictable pricing before enterprises get sticker shock at month-end.

Also, the "agentic search beats RAG" claim. True for code search - grep and glob are unbeatable when you know what you're looking for. But try that approach on a 500-page legal document or a sprawling knowledge base. The RAG haters are overcorrecting.

| 📚 Geek Corner |
|---|
| Agentic Search vs RAG: The Claude Code team ditched semantic search entirely, finding that agentic search (just using grep, glob, find) outperformed embeddings-based RAG. This works because code has structure - function names, file paths, imports. Unstructured knowledge bases? Different story. |
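
To make "just using grep and glob" concrete, here's a rough Python sketch of the idea: walk the files matching a glob and return every line that matches a regex. No embeddings, no index. This is my own illustration, not Claude Code's actual search tooling; the `grep` helper, the `demo` function and the tiny throwaway repo it builds are all hypothetical.

```python
import re
import tempfile
from pathlib import Path
from typing import Iterator, List, Tuple

Match = Tuple[str, int, str]  # (relative path, line number, stripped line)


def grep(root: Path, pattern: str, glob: str = "**/*.py") -> Iterator[Match]:
    """Yield every line under root that matches the regex, in files matching glob."""
    regex = re.compile(pattern)
    for path in sorted(root.glob(glob)):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binaries and unreadable files
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):
                yield path.relative_to(root).as_posix(), lineno, line.strip()


def demo() -> List[Match]:
    """Build a throwaway two-file repo and search it for a function definition."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "api").mkdir()
        (root / "api" / "resume.py").write_text(
            "import json\n\ndef parse_resume(raw):\n    return json.loads(raw)\n",
            encoding="utf-8",
        )
        (root / "notes.md").write_text("parse_resume is flaky\n", encoding="utf-8")
        return list(grep(root, r"def parse_resume"))


if __name__ == "__main__":
    for rel_path, lineno, line in demo():
        print(f"{rel_path}:{lineno}: {line}")
```

Notice why this works on code: the search term (`def parse_resume`) is something you can guess from the structure alone. On a pile of prose with no naming conventions, you often can't guess the exact string, which is where the approach starts to creak.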

Bottom line on the pod: Essential listening if you're mucking about with AI coding tools. Swyx and Alessio ask the right questions. Just don't take everything as gospel; some of these takes will age like milk. If you want more on why terminal agents are winning, I go deeper on that later. And last week's Gemini CLI fiasco is a proper cautionary tale on the other end of the spectrum.