Your Coding Agent's Best Feature Isn't the Code

About a year ago I wrote a piece on AI coding assistants, with a follow-up on what makes an AI a great coding partner, covering what was winning the race, where things were heading, and what to spend your money on. A year on, that warrants a status update. I'll keep it tight.

Terminal coding agents won the race ages ago. That question is settled. The IDE-embedded approach had its moment but the terminal-first agents run circles around them now. And at the top, it is basically a two-horse race: Claude Code and Codex. Everything else is doing its best to close the gap, and fair play to them, some are getting genuinely good - Copilot, opencode, ampcode, Factory, Warp, Qwen Code, Kiro are all pushing hard and I would not bet against any of them in 12 months. And then there's Gemini CLI, which seems a bit... lost. Like it showed up to the party in last season's outfit and hasn't quite clocked that everyone's moved on. But right now, the two I reach for are Claude Code and Codex.

Here's the thing though. At this point, it's not about producing code. All of them produce code at roughly the same level. It's 80% the model, 20% the harness. If GPT-5.4 or Opus 4.6 or Trinity is doing the thinking, the output quality is going to be roughly comparable regardless of which terminal wrapper is sat on top. This is Eclipse, NetBeans, VS Code, IntelliJ all over again. All of them compiled Java just fine. But VS Code won that battle and IntelliJ had its moments too. The difference was never the compiler.

Why Claude Code Keeps Getting My Money

Now, a lot of the love Claude Code gets online - the "I can't go back" posts, the "this changed how I work" threads - gets attributed to its ability to produce code for your requirements. Codex does that just as well, probably Copilot too at this point. Or it gets attributed to the autonomy stuff, but Opus as a model is probably the main driver there, not Claude Code the harness itself.

The actual reason, the one nobody writes blog posts about because it's harder to screenshot, is purely the developer experience.

As much as Claude Code now comes with a bit of a learning curve (I've seen courses going for $99 to learn Claude Code - save your money, spend that on your sub instead, seriously), once you're past the faff the tool is an absolute developer's dream. And it comes down to three things.

Their devs are actively getting feedback and acting on it. They're dogfooding it daily. They build Claude Code using Claude Code and it shows in every decision they make. You can feel when a tool is built by people who actually use it versus people who watched a demo.

They ship at a pace that gives you FOMO. Not the bad kind. The "oh they shipped something new and I need to go try it right now" kind. The tool is different every week and you want to keep up. It's mental. In a good way.

And they build stuff so extensible and personalisable it borders on absurd. Like, properly absurd. You can run arbitrary shell commands in hooks, script your own statusline, build your own pet, the lot. The ceiling is just your innovation and how deep down the rabbit hole you're willing to go.

A lot of these devX primitives are things we build on top of in wololo - the agent intelligence platform that sits across harnesses. But the raw ingredients come from the harness itself, and Claude Code's ingredients are just better.

So even though I use Claude Code differently now (most of my interaction is hermes agents spinning up through the custom harness platform and driving it autonomously via claws and hermes, less human in the loop to enjoy the devX), and they don't even support third-party harnesses anymore, I still keep the Max subscription running. The devX alone is worth it for the sessions where I do sit down with it directly.

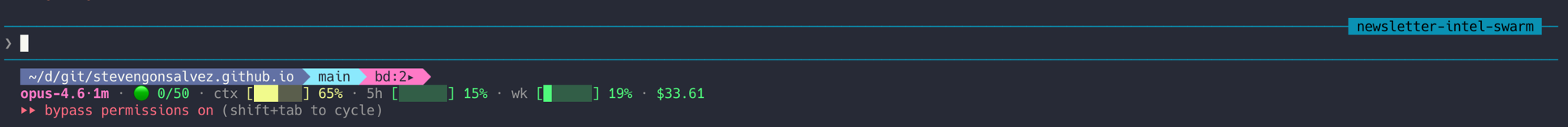

The Statusline: Your Cockpit

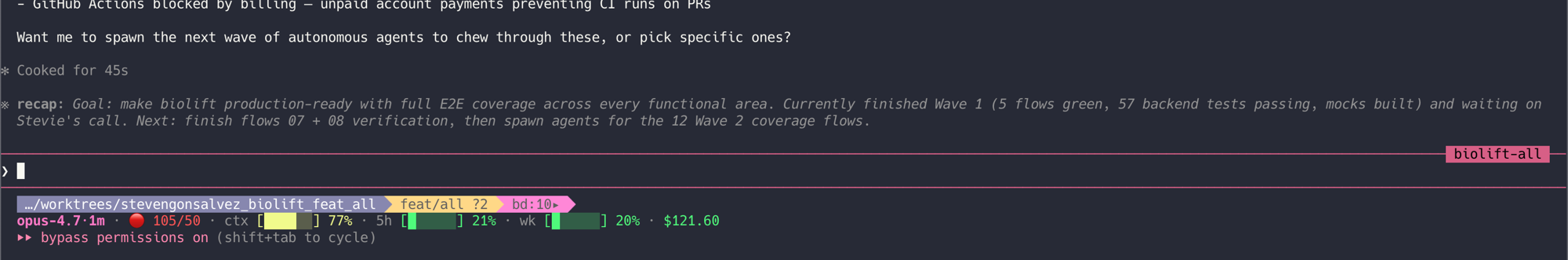

The first thing you see when you start a session. Two lines at the bottom of your terminal that tell you more about your session state than most dashboards manage in a full page.

Line one (powerline bar):

- Working directory in blue

- Git branch in cyan (goes orange when dirty), with ahead/behind counts and staged/unstaged markers

- bd:2 telling me I've got two beads tasks ready

Line two (plain text):

- Model with the 1m context suffix

- Turn count as a traffic light (green/yellow/red as you approach 50)

- Context percentage bar

- 5-hour usage bar, weekly usage bar

- Session spend in dollars

At a glance: how far into context you are, how much you've burned today and this week, what it's costing, whether your git state is clean. No switching tabs, no running commands, no checking a web dashboard.

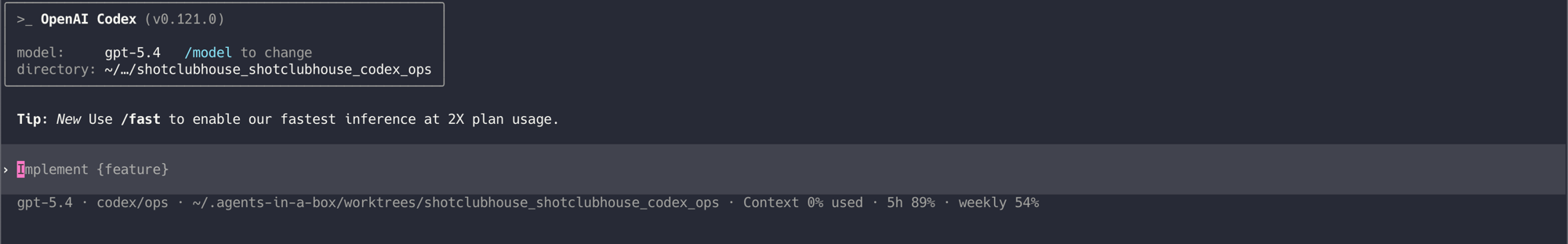

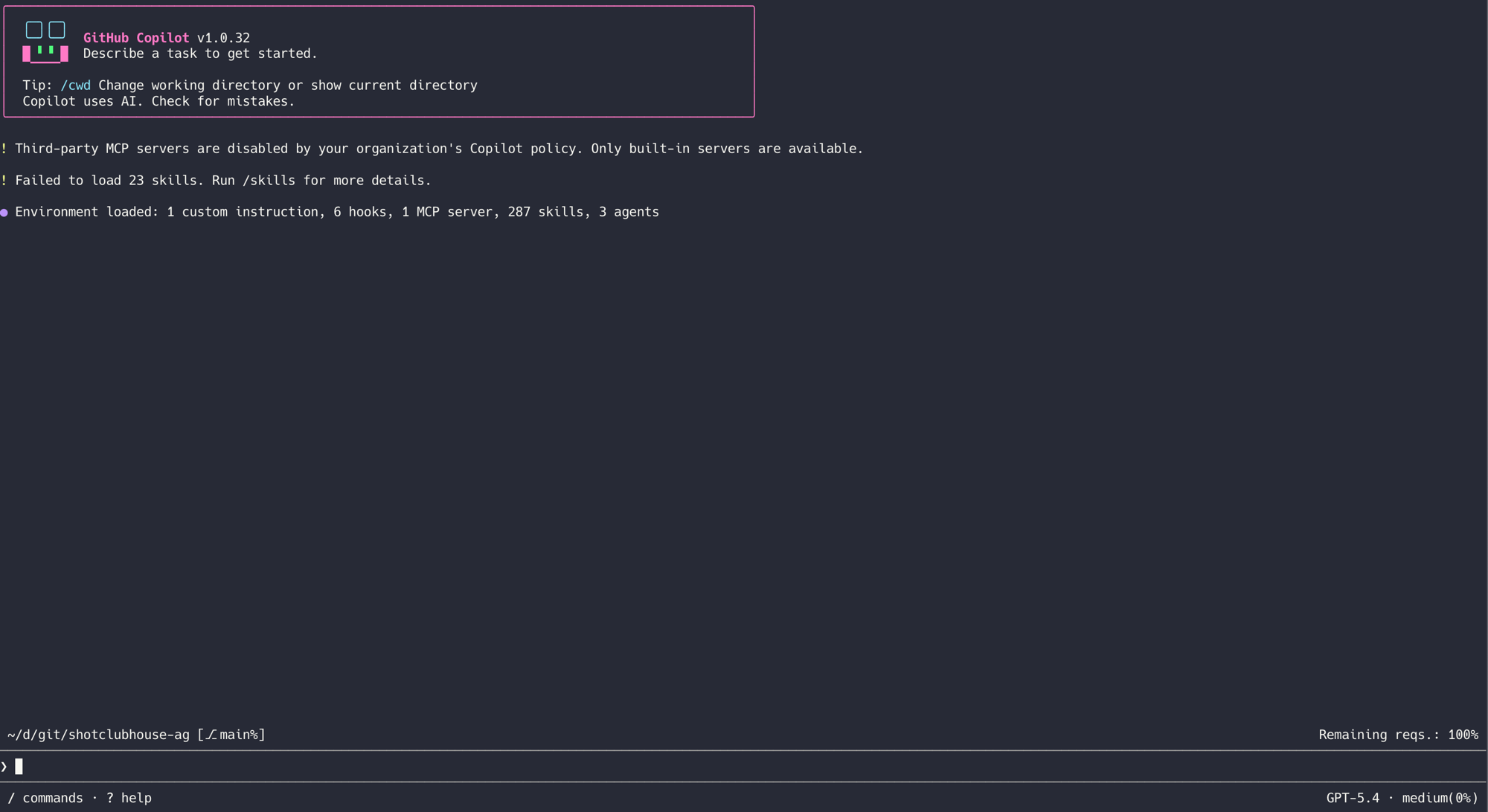

Now look at the competition:

Codex: model, working directory, context percentage, usage bars. Functional. A speedometer on a car without a fuel gauge.

Copilot: model name, remaining requests (as a percentage with no denominator), working directory, git branch. It's like being told you have "some" petrol left.

And here's the kicker - the whole statusline is scriptable. You can actually run another Claude command as part of your status to keep your main session in line with your original prompt, like warn you if Claude is digressing. Other tools are just now getting a statusline... and it's under EXPERIMENTAL flags.
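To make "scriptable" a bit more concrete, here's a minimal sketch of the plain-text second line described above. It assumes the statusline command is handed session data as JSON and prints a single line back. The field names (`model`, `turns`, `context_pct`, `cost_usd`) and the 50%/80% colour thresholds are made up for illustration, so check the statusline docs for the real payload before borrowing any of this.

```python
def traffic_light(turns: int, limit: int = 50) -> str:
    """Colour name for the turn counter as it approaches the limit.

    The 50% and 80% cut-offs are assumptions, not Claude Code's own values.
    """
    ratio = turns / limit
    if ratio < 0.5:
        return "green"
    if ratio < 0.8:
        return "yellow"
    return "red"


def bar(percent: float, width: int = 10) -> str:
    """Render a percentage as a fixed-width text bar, clamped to 0-100."""
    clamped = max(0.0, min(100.0, percent))
    filled = round(clamped / 100 * width)
    return "#" * filled + "-" * (width - filled)


def render_statusline(session: dict) -> str:
    """Build one status line from a session dict with hypothetical keys."""
    model = session.get("model", "unknown")
    turns = session.get("turns", 0)
    context_pct = session.get("context_pct", 0.0)
    cost = session.get("cost_usd", 0.0)
    return (
        f"{model} | turns {turns} ({traffic_light(turns)}) "
        f"| ctx [{bar(context_pct)}] {context_pct:.0f}% | ${cost:.2f}"
    )


# Demo with invented numbers; a real script would parse JSON from stdin instead.
print(render_statusline({"model": "Opus", "turns": 30, "context_pct": 68, "cost_usd": 1.5}))
```

Keeping the rendering in a pure function means you can test it without a live session, and the script itself stays a thin wrapper that parses the input and prints the result.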

The Stuff Everyone Else Is Playing Catch-up On

Right, digression aside, let's crack on with the bits and bobs that everyone else is playing catch-up on. Because beyond the statusline, Claude Code is operating in a different postcode entirely.

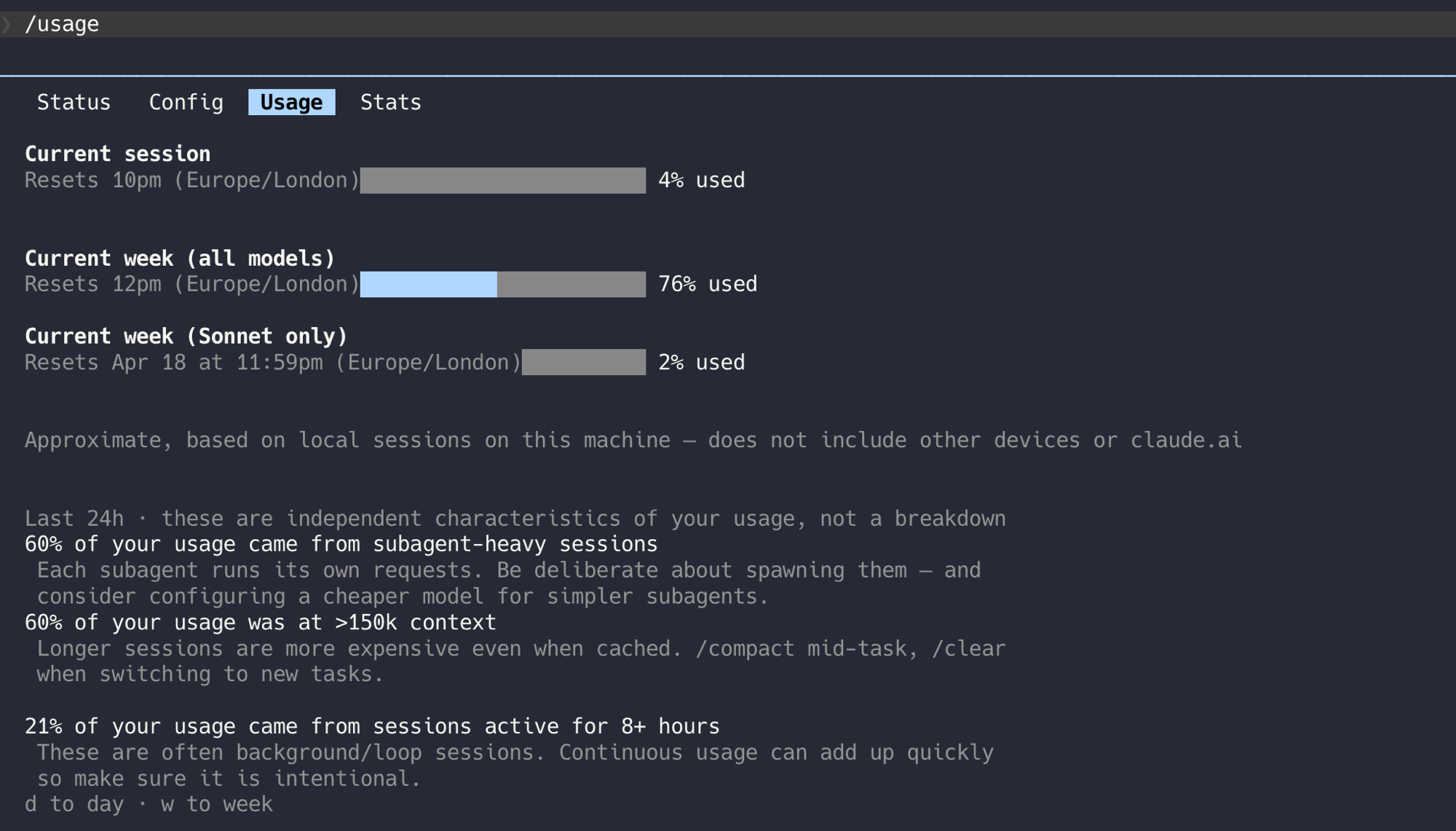

/usage with actual insights. Most tools give you a bar chart and call it done. Claude Code's /usage tells you what percentage of your usage came from subagent-heavy sessions, what ran at over 150k context, what came from sessions active 8+ hours. It's telling you why your usage is what it is, not just what it is.

60% of usage from subagent-heavy sessions. 68% at over 150k context. 21% from sessions active 8+ hours. Now I know where the tokens are going and can make real decisions about it. "Maybe don't spawn four subagents for a one-file fix" is usable advice that falls straight out of this data.

/btw for parking thoughts. You're in the middle of a complex refactor and you think "oh I should also fix that other thing." In any other tool you either interrupt your current flow or forget about it. /btw lets you park that thought without breaking the agent's current task. Picks it up when the current work is done. Tiny feature. Absolute doddle to use. Changed my workflow more than I expected.

/loop and /monitor. Run a prompt on a recurring interval, or stream events from a background process. Want to check your deploy every 5 minutes? /loop 5m check the deploy status. Want to watch a build? /monitor. These aren't complex features individually but having them built in, tested, and reliable tells you something about how the team uses their own tool.

/remote-control. Continue your session from your phone. Not through Termux or vibetunnel or jittery mosh tunnels. Properly, through the Claude Code app. And recently this has been as reliable as your morning alarm - which, unlike most software analogies, is actually a compliment.

/web-setup and /teleport. Port your session to execute somewhere that isn't your machine. When your Mac is burning up and the session is fairly independent stuff, just teleport it. Fair play, Codex had the web execution thing first with Codex Web. But having it integrated into the flow as a natural action rather than a separate product is different.

Recap on resume. When you come back to a session after context compaction, Claude Code shows you what happened. What was accomplished, what's in progress, what's next. You don't scroll through 200 messages trying to reconstruct where you were.

Multi-session orchestration. Four agents running in parallel, each with their own full statusline, each in their own tmux pane. You can see at a glance which one is burning context, which one is idle, which one has dirty git state.

/batch. Need to migrate 100 repositories from axios to superagent? This is the one. Yeah, packaged skills do similar things - beads with swarm orchestration, or agents-in-a-box (the agents-in-a-box write-up covers the architecture in detail) which is what I use for multi-agent coordination. But having it built in tells you the level of dogfooding going on here. These aren't theoretical features cobbled together from a product roadmap. They're building the features they needed yesterday.

/simplify. Brilliant. Reviews your recently changed code for:

- Reuse - spots places where you're reinventing something that already exists in the codebase

- Quality - flags overengineering, unnecessary abstractions, dead code

- Efficiency - catches redundant work, bloated patterns

One command. Done. Pair it with your own critique skill and the design distillation from impeccable, and it absolutely gets the adipose off your code.

/ultraplan. I'll be honest, this one's a bit janky. If you've already got good /research and /plan skills beyond what Claude provides out of the box, /ultraplan doesn't add much. But fair enough, it's there and it's improving.

/insights - This Is Where It Gets Proper Good

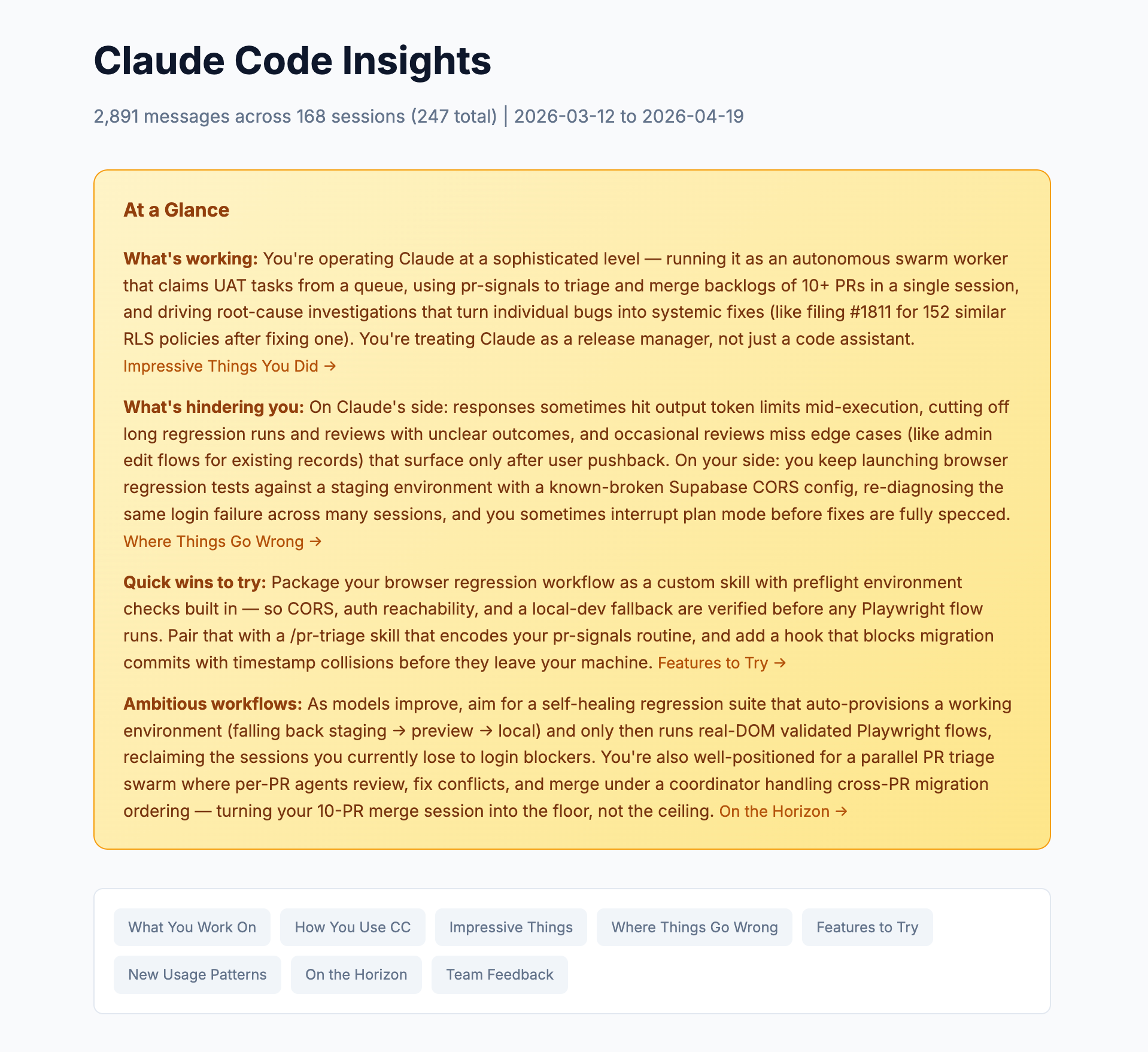

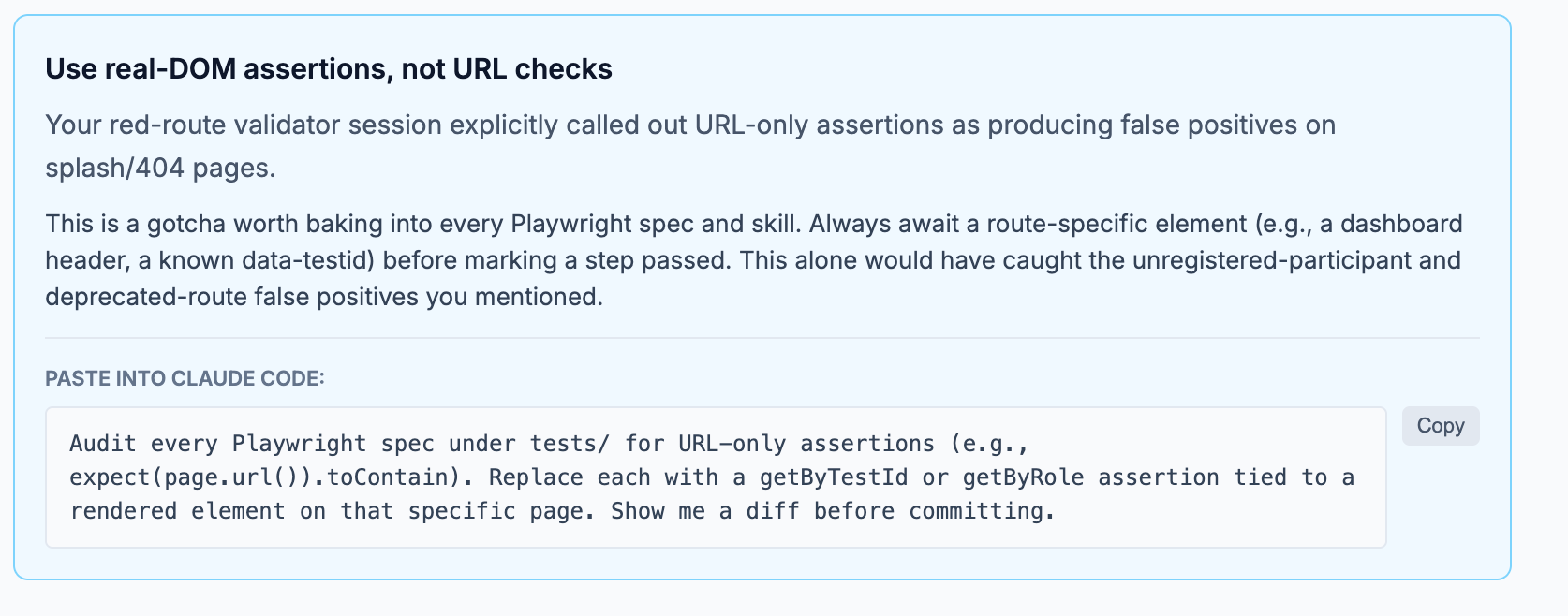

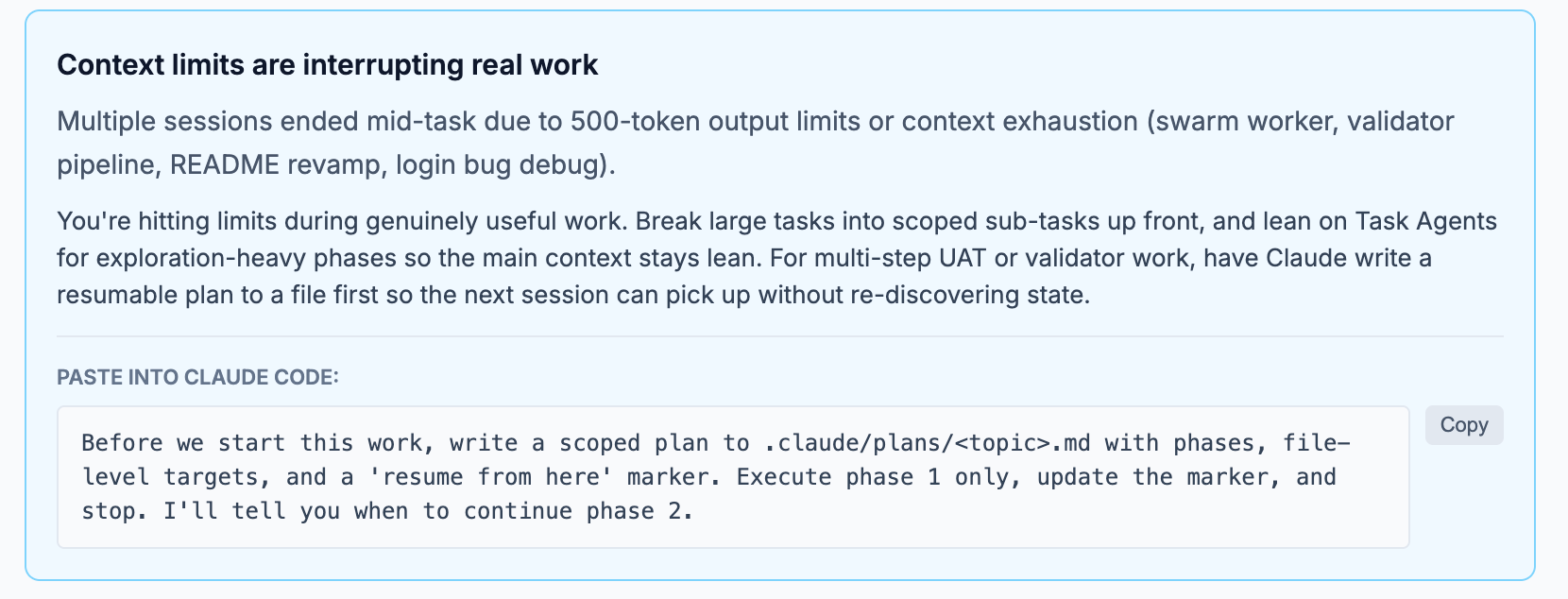

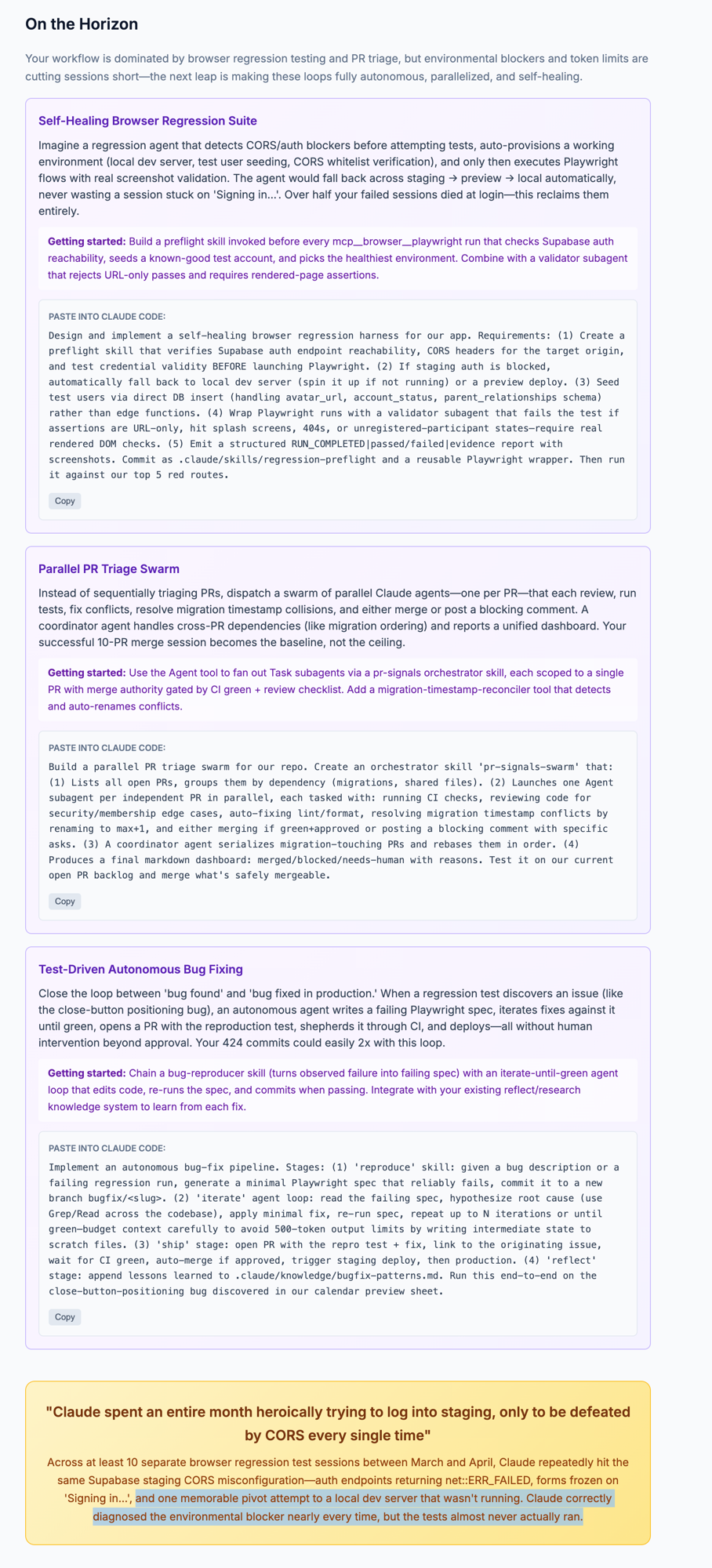

Right, this is the one that proper blew my mind. Claude Code analyses your usage patterns across sessions and produces a full report on how you work, what's going well, what's rubbish, and what to try next.

These screenshots are from a shotclubhouse.com ops instance (2,891 messages across 168 sessions).

The shotclubhouse ops instance runs many Claude Code sessions for bugs, support, and testing. Most of it is autonomous with zero human involvement. The self-healing nature of the setup handles it. But the token churn is real.

And /insights doesn't just state the problem. It offers exactly the solutions and fixes, with copy-paste prompts ready to go. "Here's what's going wrong. Here's why. Here's what to paste into Claude Code to fix it." This is the best developer experience I've used in any coding tool. I've not seen any other toolset - IDE, web SaaS, or terminal - come close to this without building out harness infrastructure yourself.

And even if you do build it yourself (in my case I've got Langfuse observability wired into the hook system and a full reflection/self-improvement system), all you end up with is a lot of data points and a lot more manual thinking to improve the system. The observability and reflection stuff deserves its own long-form piece, but the core of it is understanding how well the session and the human driver (or agent driver, for that matter) work together. /insights does that deriving for you, out of the box, no wiring needed.

Where /insights points to

To reach something resembling an autonomous agentic software tool, or a zero-touch coding agent, there's a stack of things still needed (I keep promising to write the long-form on this, I know):

- Full research autonomy - ability to research, retrieve, analyse and use skills at its discretion, securely

- Knowledge infrastructure - build and tap into knowledge through GraphRAGs, semantic search, wikis, whatever fits the problem best

- Self-improvement - the biggest one. The agent improving its own harness: building hooks, writing plugins, extending its own skills, building out its knowledge base and correcting it when it's wrong, getting better at getting better

- Measurement and baselines - benchmarking whether the agent is actually improving, not just churning differently

The long-form will go deeper into the how's, the tools for the job, and where you pitch in. Consider this the trailer.

| Geek Corner |
|---|
| The Self-Improvement Loop: Here's something you can do right now. Combine /insights (finds the problems) with /simplify (gets the adipose off) with some form of /validate (checks the fix didn't break anything), and you already have a self-improvement system. Plug in a /schedule to run /insights daily and feed the output into Claude Code to /implement the suggestions. Morning you wake up to a more optimised set of skills, better prompts, tighter token usage, stronger tests. The roof is just holding you down. |

/powerup - The Meta Command

A meta command to discover features and help you help yourself. Seriously, please don't pay $99 to learn Claude Code. Run /powerup. It analyses how you use the tool and suggests features you're not using yet. It literally teaches itself to you. There's loads more under the covers than what you see on first use, and this is how you suss it out.

The Hook System

No other pure coding agent has the range of hooks Claude Code has. Other assistant harnesses do (the claws, hermes, that lot), but bog standard terminal coding agents barely manage two or three hooks. And most of them added them recently. Claude Code has had hooks for close to a year now, nearly as long as I've been mucking about with it.

And this hook system is properly customisable. You could run anything in hooks. Hooks to shape your session. Hooks to read out stuff. Hooks to announce things. Status checks, guardrails, observability fire-offs to Langfuse or whatever you're running. The ceiling is just your innovation and how much yak shaving you're willing to do on a Saturday afternoon.
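As a flavour of the guardrail kind, here's a minimal sketch of the decision logic a pre-tool-call hook might run. It assumes the hook is handed an event with a `tool_name` and a `tool_input.command` field. Treat those names, the `review_tool_call` helper and the deny-list as illustrative, and check the hooks reference for how a hook actually receives the event and signals a block.

```python
import re

# Hypothetical deny-list; tune it to your own risk tolerance.
BLOCKED_PATTERNS = [
    r"\brm\s+-rf\s+/(\s|$)",      # wiping the filesystem root
    r"\bgit\s+push\b.*--force",   # force-pushing over shared history
]


def review_tool_call(event: dict) -> tuple[bool, str]:
    """Return (allowed, reason) for a single tool-call event."""
    if event.get("tool_name") != "Bash":
        return True, "not a shell command"
    command = event.get("tool_input", {}).get("command", "")
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, command):
            return False, f"blocked by guardrail: {pattern}"
    return True, "ok"


# Demo with an invented event.
print(review_tool_call({"tool_name": "Bash", "tool_input": {"command": "git push origin main --force"}}))
```

The same shape works for the observability case: swap the deny-list for a call that forwards the event to whatever you're running.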

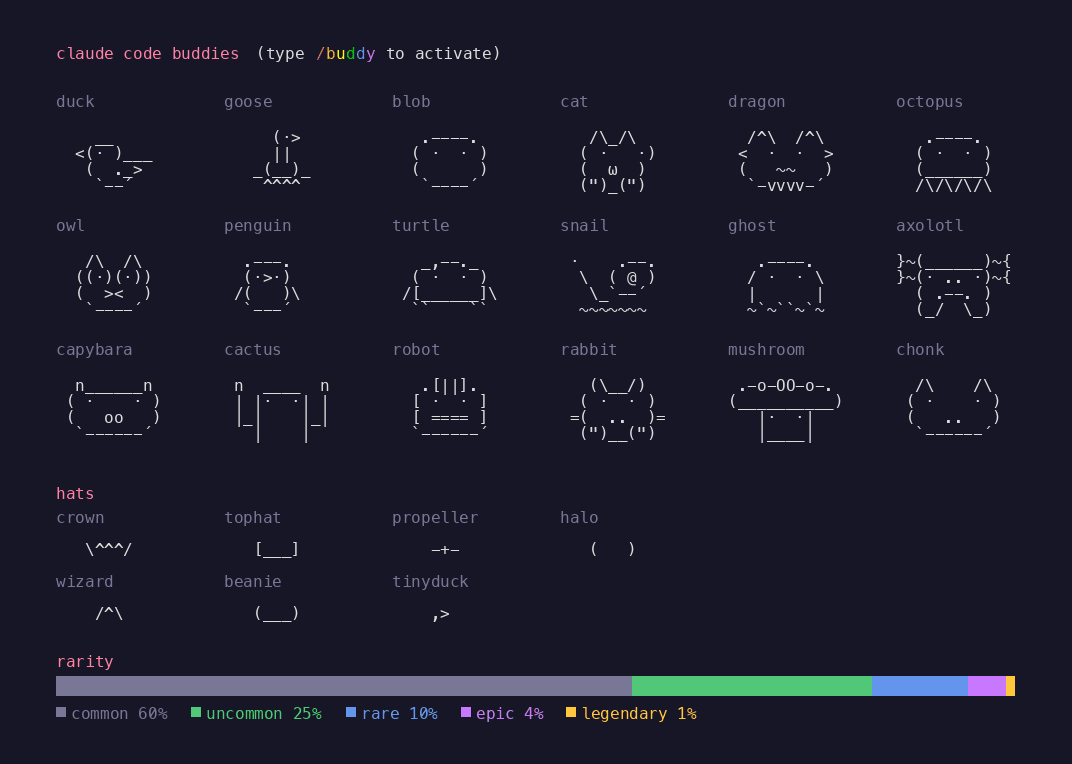

The community ran with it too. ccpet, claude-code-tamagotchi. Custom statusline pets that persist between sessions. Nudges from your mascot when context gets high. Mini observability in a 3-line ASCII duck. None of the other terminal agents have the extensibility to even make this possible.

The /buddy Easter Egg (RIP)

Speaking of which. /buddy gave you an ASCII pet in your statusline. Duck, goose, blob, cat, dragon, octopus. With hats. Crown, tophat, propeller, halo. And a rarity system. 60% common, 25% uncommon, 10% rare, 4% epic, 1% legendary.
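For the curious, a loot table like that is a few lines of weighted sampling. This is a sketch of the general technique, not how /buddy was actually implemented. The `roll_buddy` helper is hypothetical, and picking species and hat independently of rarity is my assumption.

```python
import random

# Rarity weights as percentages, from the breakdown above.
RARITY_WEIGHTS = {"common": 60, "uncommon": 25, "rare": 10, "epic": 4, "legendary": 1}
SPECIES = ["duck", "goose", "blob", "cat", "dragon", "octopus"]
HATS = ["crown", "tophat", "propeller", "halo"]


def roll_buddy(rng: random.Random) -> dict:
    """Roll one pet: weighted rarity, uniform species and hat (an assumption)."""
    rarity = rng.choices(
        list(RARITY_WEIGHTS), weights=list(RARITY_WEIGHTS.values()), k=1
    )[0]
    return {"species": rng.choice(SPECIES), "hat": rng.choice(HATS), "rarity": rarity}


# Demo roll; pass a seeded Random if you want a reproducible pet.
print(roll_buddy(random.Random()))
```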

April Fools feature. Shipped, enjoyed, removed. But it tells you something about the team building this. They care about the experience of sitting in a terminal for 10 hours a day. Nobody at OpenAI or GitHub is shipping ASCII pets with loot tables. Nobody at those companies is thinking "what would make the 8th hour of a refactor slightly more bearable." And that mentality shows up in every other feature they ship.

The Ranking (April 2026)

After running all of them daily for months:

- Claude Code - The full package. Model + devX + extensibility. The statusline alone packs more into two lines than most dashboards manage in a full page.

- Codex - Best raw code production when you pair models right. The GPT-5.4 + GPT-5.3-Codex combination for thinking-then-coding is genuinely strong. But the harness is bare bones.

- Copilot - Getting better fast but still feels like a VS Code extension that grew legs. The terminal version is playing catch-up.

- Everything else - opencode, Warp, Droid, ampcode, Gemini CLI. Various stages of "genuinely promising but not there yet." Give them 6 months and this ranking might look different.

The Point

The gap between Claude Code and the rest is not just the model. It's the developer experience. The hooks, the statusline, the session management, the insights, the extensibility, the shipping pace, and honestly the community that's cobbled together all sorts of mad extensions around it. That's what keeps people on it. And the gap keeps growing.

The conventional wisdom is 80% model, 20% harness. Claude Code is pushing that 20% closer to 30%, to the point where you'd find Claude Code with Sonnet beating GitHub Copilot with Opus on agentic engineering work (not pure coding, but the multi-step, multi-tool, plan-execute-validate kind). That's how much the margins are growing on the harness side.

Eclipse and VS Code both compiled Java. Only one of them made you want to open it in the morning.